One of the questions we get most often is: what actually happens when the Bee builds the map? People understand the broad strokes (cameras on vehicles, computer vision, data flowing into a dataset), but the mechanism by which a raw observation becomes a trusted map feature is, we think, genuinely interesting and underappreciated.

So we thought we'd take you through one concrete example. A single speed sign on a single street in San Francisco. We'll follow it from its first detection to the moment the map decides it's real — and then show you what happens after that.

Keep in mind: what you're about to see for one sign has to happen millions of times over, across every geography the Bee operates in, for every road sign, lane marking, turn restriction, and traffic signal in the dataset. The process is the same. The scale is what makes it hard.

The Sign

3rd Street at Mariposa. Dogpatch, San Francisco. The UCSF Medical Center on one side, a perforated metal facade on the other. A Muni light rail shelter with a lime-green pole. Palm trees. And bolted to a pole on the sidewalk — a white rectangular sign: SPEED LIMIT 30.

If you're an autonomous vehicle pulling speed limits from a map, this sign matters. If the map says 30 and the sign says 25, you have a problem. If the map says nothing at all, you have a bigger one. The question isn't whether someone mapped this sign once — it's whether anyone is still checking.

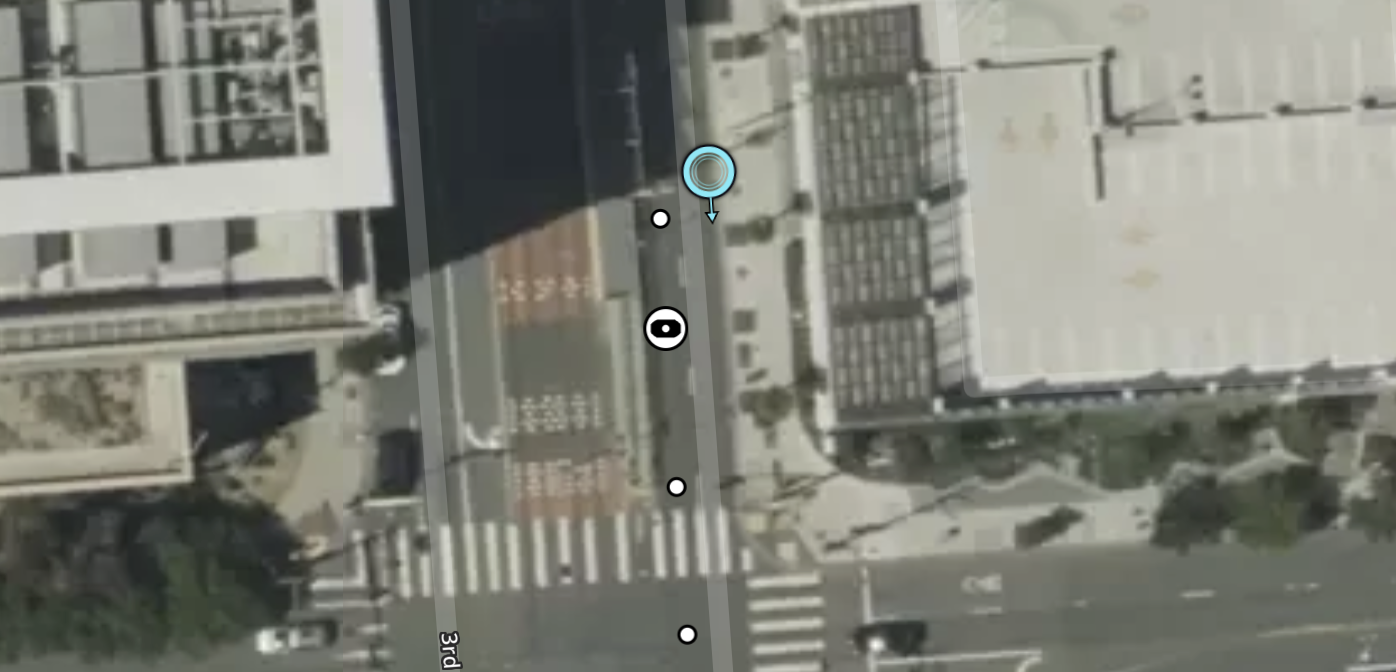

Dogpatch — 3rd Street at Mariposa

June 13, 2025

A camera mounted to a windshield drives south on 3rd Street. Overcast. The kind of San Francisco morning where the sky and the concrete are the same color. The road ahead is empty — no traffic, no pedestrians, just the long straight corridor of 3rd Street vanishing into the fog. The Muni shelter sits to the left, its glass panels reflecting nothing.

The Bee catches the sign in one frame. One bounding box. The computer vision model reads the text, classifies the object, records the GPS position.

A new map feature is born.

Feature ID: 68508151d2982ddb15ac9393. Class: speed-sign. Speed limit: 30 mph. Type: regulatory. Confidence: low.

One camera. One frame. One claim. The dataset creates a record but doesn't commit — think of it as a pencil mark on a draft. The sign exists in the system, but the system isn't sure the sign exists in the world.
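To make the pencil mark concrete, here is a minimal Python sketch of what such a provisional record might look like. The field values come from the feature above. The class and function names (`Detection`, `MapFeature`, `create_feature`) and the coordinates are illustrative assumptions, not the actual Bee Maps schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class Detection:
    """One sighting of the sign by one device (hypothetical structure)."""
    seen_on: date
    lon: float
    lat: float
    speed_limit_mph: int


@dataclass
class MapFeature:
    """A map feature record; field names are assumptions for illustration."""
    feature_id: str
    feature_class: str
    speed_limit_mph: int
    sign_type: str
    confidence: str = "low"  # a single detection is a claim, not a fact
    detections: List[Detection] = field(default_factory=list)


def create_feature(feature_id: str, first: Detection) -> MapFeature:
    """Create a provisional record from the first detection only."""
    return MapFeature(
        feature_id=feature_id,
        feature_class="speed-sign",
        speed_limit_mph=first.speed_limit_mph,
        sign_type="regulatory",
        detections=[first],
    )


# Placeholder coordinates near 3rd and Mariposa, not the recorded position.
first_sighting = Detection(date(2025, 6, 13), -122.3888, 37.7647, 30)
feature = create_feature("68508151d2982ddb15ac9393", first_sighting)
print(feature.confidence, len(feature.detections))  # low 1
```

The record starts at low confidence by default, so nothing downstream can mistake a first sighting for a verified sign.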

Try this API query

    curl -X POST "https://beemaps.com/api/developer/map-data?apiKey=<your-api-key>" \
      -H "Content-Type: application/json" \
      -d '{
        "type": ["mapFeatures"],
        "geometry": {
          "type": "Polygon",
          "coordinates": [[
            [-122.3898, 37.7637],
            [-122.3878, 37.7637],
            [-122.3878, 37.7657],
            [-122.3898, 37.7657],
            [-122.3898, 37.7637]
          ]]
        }
      }'

The First Witnesses

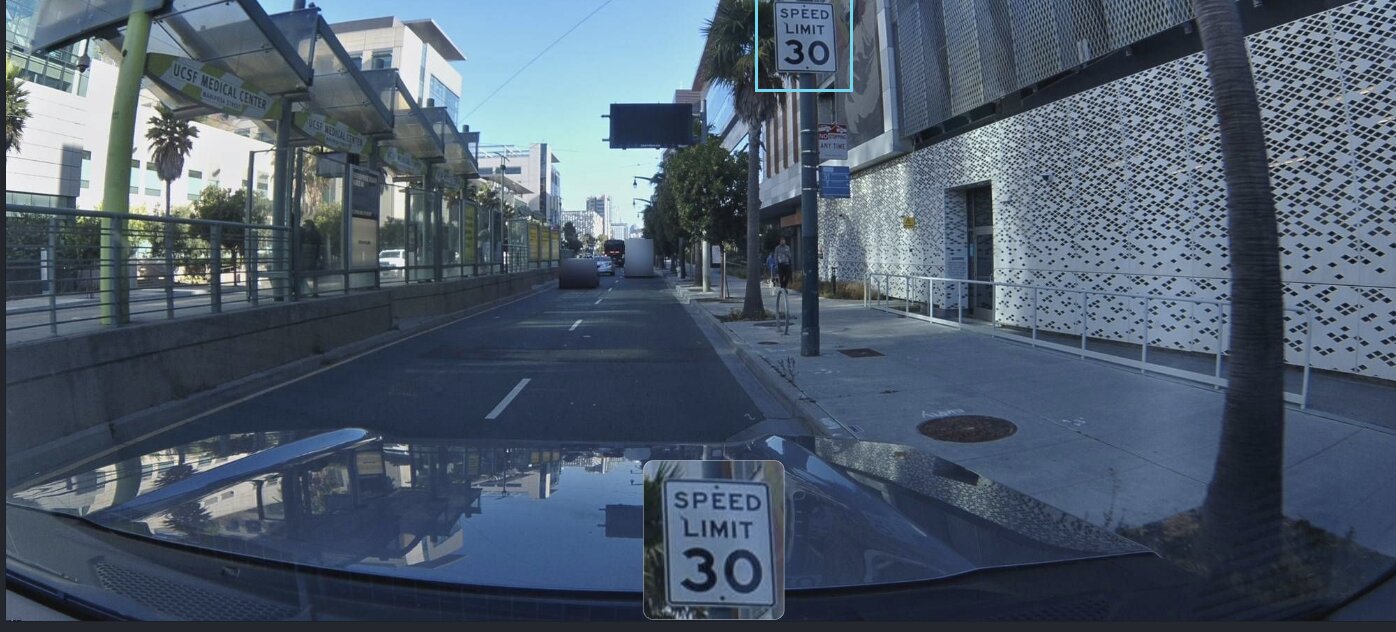

Four weeks later, on July 11, a second device passes through. Different driver, different vehicle, different camera sensor. This time the sky is clear: sharp blue, hard shadows on the pavement, the medical center's white facade bright in the sun.

The second Bee reads the same three words. Records a GPS position within meters of the first. Two observers. Same conclusion. Still not enough.

Two weeks later, a third. The fog is back. You can see the rain spatter on the windshield, the wiper blade intruding from the left edge of the frame. The world looks nothing like it did on July 11 — but the sign looks exactly the same.

Three detections in six weeks. Not fast. But the system notices something: none of them disagree. Three independent observers, three identical conclusions. Same value, same position, same classification.

On July 25, the system makes its first real commitment. Six attribute changes in a single batch — refining the GPS position, locking in the speed value, confirming the sign type. The pencil mark gets traced in ink.

Look at this frame compared to the first one. June 13 was a gray void. Now it's a clear afternoon — the UCSF building reflected in the car's hood, the perforated facade casting geometric shadows on the sidewalk. Different time, different light, different camera.

Same sign. Same pole. Same three words.

This is how trust begins. Not with one perfect observation, but with multiple imperfect ones that agree.
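What does "agree" mean in practice? Here is a minimal Python sketch, assuming agreement means the same class, the same speed value, and GPS fixes that all fall within a few meters of each other. The 10-meter threshold, the `Observation` type and the sample coordinates are our assumptions for illustration, not the production rule.

```python
import math
from itertools import combinations
from typing import NamedTuple, Sequence


class Observation(NamedTuple):
    feature_class: str
    speed_limit_mph: int
    lon: float
    lat: float


def haversine_m(lon1: float, lat1: float, lon2: float, lat2: float) -> float:
    """Great-circle distance between two points, in meters."""
    r = 6_371_000.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def observations_agree(obs: Sequence[Observation], max_spread_m: float = 10.0) -> bool:
    """True if every observation has the same class and value and all
    positions lie within max_spread_m of one another."""
    if not obs:
        return False
    first = obs[0]
    same_label = all(
        o.feature_class == first.feature_class
        and o.speed_limit_mph == first.speed_limit_mph
        for o in obs
    )
    spread = max(
        (haversine_m(a.lon, a.lat, b.lon, b.lat) for a, b in combinations(obs, 2)),
        default=0.0,
    )
    return same_label and spread <= max_spread_m


# Three made-up fixes a couple of meters apart, all reading 30.
sightings = [
    Observation("speed-sign", 30, -122.38880, 37.76470),
    Observation("speed-sign", 30, -122.38878, 37.76470),
    Observation("speed-sign", 30, -122.38880, 37.76472),
]
print(observations_agree(sightings))  # True
```

A single reading of 25, or a fix a hundred meters down the street, is enough to make the check fail.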

The Build

August 2025. The detections accelerate — four more confirmations in eight days. August 8, 10, 13, 15. Each from a device running different firmware, which means different physical hardware. This isn't one Bee making the same claim repeatedly. These are independent witnesses.

The firmware versions matter. When a sign is detected by devices running v1.4.2, v1.5.0, v1.5.1, and v1.6.0, you know these aren't the same camera with the same calibration error. If one model's optical distortion consistently misread a 3 as an 8, you'd expect other models to disagree. They don't. Four different sensor packages, four identical readings: SPEED LIMIT 30.

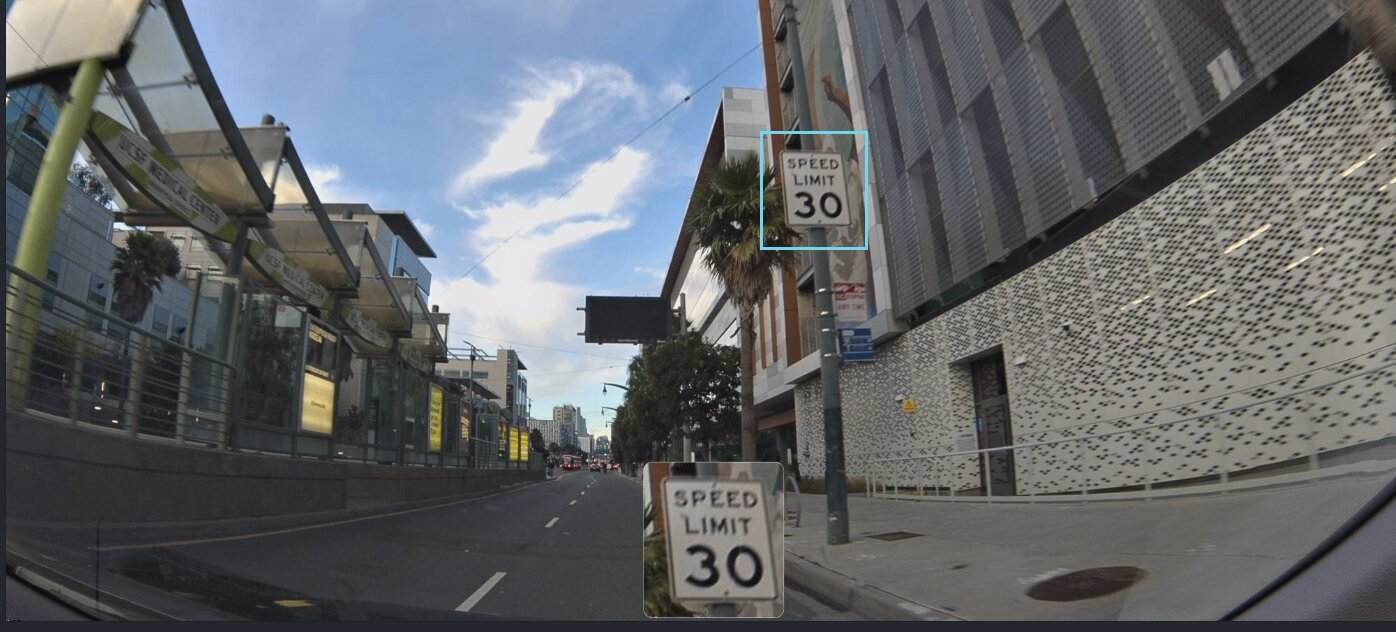

By mid-August, seven different devices have independently confirmed the sign. Seven different moments, seven different dashcams, seven GPS fixes clustered within a few meters of each other on the west side of 3rd Street.

Notice how close this camera is. The sign nearly fills the inset crop — you can read the serifs on the numerals. Earlier detections caught it from 50 meters out. This one is practically beside it. The system doesn't care about the distance. It cares that a seventh independent observer arrived at the same conclusion as the first six.

The evidence is accumulating.

September 23, 2025

Three months and ten days after the first detection. The system runs its consensus algorithm — a calculation that weighs the number of independent observations, the consistency of their classifications, the spatial agreement of their GPS positions, and the diversity of the devices that produced them.

The result: Swarm Consistency Reached.
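We are not publishing the algorithm here, but the four inputs it weighs can be sketched as a simple gate in Python. Everything specific in this sketch is an assumption made for illustration: the thresholds, the `Detection` type, the device IDs, the coordinates, and the way firmware versions are assigned to detections.

```python
import math
from collections import Counter
from typing import NamedTuple, Sequence


class Detection(NamedTuple):
    device_id: str
    firmware: str
    label: str  # class plus value, e.g. "speed-sign:30"
    lon: float
    lat: float


def offset_m(lon: float, lat: float, lon0: float, lat0: float) -> float:
    """Approximate ground distance in meters; adequate over short ranges."""
    r = 6_371_000.0
    dx = math.radians(lon - lon0) * math.cos(math.radians(lat0)) * r
    dy = math.radians(lat - lat0) * r
    return math.hypot(dx, dy)


def consensus_reached(
    dets: Sequence[Detection],
    min_devices: int = 5,           # assumed threshold
    min_label_share: float = 0.9,   # assumed threshold
    max_radius_m: float = 10.0,     # assumed threshold
    min_firmware: int = 3,          # assumed threshold
) -> bool:
    """Gate on observer count, label consistency, spatial agreement
    and device diversity."""
    if not dets:
        return False
    devices = {d.device_id for d in dets}
    firmware = {d.firmware for d in dets}
    _, top_count = Counter(d.label for d in dets).most_common(1)[0]
    label_share = top_count / len(dets)
    lon0 = sum(d.lon for d in dets) / len(dets)
    lat0 = sum(d.lat for d in dets) / len(dets)
    radius = max(offset_m(d.lon, d.lat, lon0, lat0) for d in dets)
    return (
        len(devices) >= min_devices
        and label_share >= min_label_share
        and radius <= max_radius_m
        and len(firmware) >= min_firmware
    )


versions = ["v1.4.2", "v1.5.0", "v1.5.1", "v1.6.0"]
seven = [
    Detection(f"device-{i}", versions[i % 4], "speed-sign:30",
              -122.3888 + i * 0.000005, 37.7647 + i * 0.000005)
    for i in range(7)
]
print(consensus_reached(seven[:3]), consensus_reached(seven))  # False True
```

In the real timeline the seventh detection landed in mid-August and consensus was declared on September 23, so the production calculation evidently involves more than count thresholds like these. Treat the sketch as a picture of the inputs, not of the decision rule.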

Look at this frame. It's an ordinary Tuesday. A box truck rumbles south in the lane ahead. A cyclist rolls past the perforated facade on the right. The UCSF Medical Center sign is legible on the transit shelter. And on the pole — the same white rectangle. SPEED LIMIT 30. The system doesn't know it's a Tuesday. It doesn't know about the cyclist or the truck. It only knows that seven independent cameras have now agreed, across three months and every weather condition San Francisco can produce, that this sign says 30.

This is the moment the map says: I believe this.

The classification is locked. The position is confirmed. This isn't a single camera's opinion anymore — it's a consensus formed by a swarm of independent observers.

Three days later, on September 26, a different vehicle passes the same sign. An orange traffic cone sits on the sidewalk next to a utility box; some maintenance crew was here and left. The shadows are longer. None of this matters to the algorithm. What matters is that detection number eight just landed, and it agrees with the other seven.

No human reviewed this sign. No cartographer drove to 3rd Street with a clipboard. No government agency uploaded a dataset. A network of cameras, mounted to ordinary vehicles driven by ordinary people, independently and repeatedly observed the same physical object — and arrived at the same conclusion. The map built its own confidence.

The Map Doesn't Stop

Here is the part most people don't expect.

The sign is verified. Consensus reached. You might assume the system files it away — marks it done, stops looking.

It doesn't.

In the five weeks between September 26 and November 1, sixteen more detections land. Sixteen additional independent confirmations of a sign the map already trusts.

October brings traffic. The earlier frames were mostly empty road, but now there are vehicles in both lanes — a dark SUV ahead, a sedan pulling alongside on the right. The sign peeks out above the roofline. The Bee reads it anyway. Detection number eleven. Still 30.

Why keep checking? Because the physical world changes. Signs get replaced. Speed limits get lowered for construction, then raised again. A sign that was there last month might be a blank pole next month. A sign that said 30 might say 25. The only way to know is to keep looking.

Mid-October. The palms throw long shadows across the lanes. Two white SUVs are ahead. The medical center is in the background. A sticker on the lower-left corner of this windshield — a different fleet vehicle, a different company, a different reason for being on 3rd Street. Same sign. Same reading.

Every post-consensus detection serves two purposes. First, it re-confirms the sign still exists. Second, it would catch the change if the sign were removed, replaced, or altered. If a device saw "SPEED LIMIT 25" where 30 used to be, the system would flag the disagreement. The consensus would weaken. Eventually, the map would update.
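Here is one way that flag, weaken, update sequence could look in Python. It is a sketch under our own assumptions: the `TrustedSign` type, the `observe` function and the rule that three matching disagreements trigger an update are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TrustedSign:
    value: int    # speed limit the map currently believes
    support: int  # confirmations backing that value
    challengers: Dict[int, int] = field(default_factory=dict)


def observe(sign: TrustedSign, seen_value: int, switch_after: int = 3) -> str:
    """Apply one post-consensus detection and report what happened."""
    if seen_value == sign.value:
        sign.support += 1
        return "reconfirmed"
    # A disagreement is recorded and weakens the current consensus.
    sign.challengers[seen_value] = sign.challengers.get(seen_value, 0) + 1
    sign.support = max(0, sign.support - 1)
    if sign.challengers[seen_value] >= switch_after:
        # Enough detections agree on the new value: the map updates.
        sign.value = seen_value
        sign.support = sign.challengers[seen_value]
        sign.challengers = {}
        return "updated"
    return "flagged"


sign = TrustedSign(value=30, support=7)
events = [observe(sign, v) for v in (30, 30, 25, 25, 25)]
print(events)      # ['reconfirmed', 'reconfirmed', 'flagged', 'flagged', 'updated']
print(sign.value)  # 25
```

One stray misread only raises a flag. It takes repeated, matching disagreement before the trusted value changes, which mirrors how the sign earned trust in the first place.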

Late October. A white Tesla is pulling to the curb on the right, just past the sign. The palm tree trunk casts a line across the inset crop. The sign is partially obscured by the car — but the Bee's bounding box still catches it. Still 30.

A few days later. Rain. You can see it on the windshield — the spatter, the slight haze. A red Muni bus glows through the drizzle on the left. A cargo van lumbers ahead. This is as far from the clear September consensus day as weather gets in San Francisco. The sign doesn't care about the weather. Neither does the Bee.

Detection nineteen. SPEED LIMIT 30.

November 1. Overcast. The electronic transit sign above the road is lit up with route information. An SUV is ahead. The Bee is close to the sign now — the bounding box is large, the crop is crisp. Less than five months after the first detection, the sign is still here. Same pole. Same position. Same three words.

Twenty-three detections in under five months. Every single one says the same thing.

The map knows.

The Receipts

Twenty-three detections. Thirteen different firmware versions. Under five months.

| # | Date | Event |
|---|---|---|
| 1 | Jun 13, 2025 | First detection |
| 2-3 | Jul 11-25, 2025 | 2 confirmations + attribute refinement |
| 4-7 | Aug 8-15, 2025 | 4 confirmations in 8 days |
| - | Sep 23, 2025 | Swarm Consistency Reached |
| 8-15 | Sep 26-Oct 12, 2025 | 8 post-consensus detections |
| 16 | Oct 14, 2025 | Continued verification |
| 17 | Oct 16, 2025 | Continued verification |
| 18 | Oct 19, 2025 | Continued verification |
| 19 | Oct 21, 2025 | Continued verification |
| 20 | Oct 23, 2025 | Continued verification |
| 21 | Oct 25, 2025 | Continued verification |
| 22 | Oct 28, 2025 | Continued verification |
| 23 | Nov 1, 2025 | Most recent detection |

Seven detections before consensus. Sixteen after — all within five weeks. The map doesn't stop checking just because it's sure.
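If you want to check the arithmetic yourself, the table's counts and intervals take a few lines of Python to reproduce. The dates are transcribed from the table. Rows that cover a date range are stored as a count plus first and last date, because the table does not list every individual date.

```python
from datetime import date

# (number of detections, first date, last date), transcribed from the table.
rows = [
    (1, date(2025, 6, 13), date(2025, 6, 13)),
    (2, date(2025, 7, 11), date(2025, 7, 25)),
    (4, date(2025, 8, 8), date(2025, 8, 15)),
    (8, date(2025, 9, 26), date(2025, 10, 12)),
] + [
    (1, d, d)
    for d in (
        date(2025, 10, 14), date(2025, 10, 16), date(2025, 10, 19),
        date(2025, 10, 21), date(2025, 10, 23), date(2025, 10, 25),
        date(2025, 10, 28), date(2025, 11, 1),
    )
]
consensus = date(2025, 9, 23)

before = sum(n for n, _, last in rows if last < consensus)
after = sum(n for n, first, _ in rows if first > consensus)
first_seen = rows[0][1]
last_seen = rows[-1][2]

print(before, after, before + after)         # 7 16 23
print((consensus - first_seen).days)         # 102 days from first detection to consensus
print((last_seen - date(2025, 9, 26)).days)  # 36 days, just over five weeks
print(consensus.weekday())                   # 1 (Monday is 0, so a Tuesday)
```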

What This Means

Every road sign on every street in every city goes through this same process. Not because a cartographer scheduled it — because someone drove to work.

The 75,000+ Bee devices deployed globally aren't mapping on purpose. They're commuting, delivering, patrolling, servicing. The mapping is a side effect of driving. And every pass feeds the same consensus engine: detect, classify, confirm, re-confirm.

Traditional maps are snapshots. A surveyor visits a corridor, records what's there, and moves on. The data is accurate on the day it's collected. Six months later, a construction crew replaces a sign — and the map is wrong until someone visits again.

A swarm doesn't visit. It inhabits. The same roads get driven every day by different vehicles, and every drive is a fresh observation. A map that's re-confirmed every few days doesn't just tell you what was true last spring. It tells you what's true now — and it would tell you the moment that changes.

This sign on 3rd Street hasn't changed since June. Twenty-three cameras confirmed it. That's a fact worth knowing, and the only way to know it is to never stop checking.

23 Cameras, One Sign

You drove past it a thousand times. Twenty-three cameras read it for you.

And now the map knows.