About Us

Physical AI requires something the internet can't provide: fresh video and structured data about the world. We built the full stack — from the sensor to the API — to deliver it.

The Opportunity

Why video changes everything

Language models learned to write by reading the internet. But the physical world doesn't fit in text. A single minute of video contains depth, motion, lighting, occlusion, object relationships — spatial and temporal context that no written description can capture. One dashcam drive through a city teaches more about how the physical world behaves than a thousand pages of text about it.

Text describes the world. Video is the world — every frame a lesson in how physical reality works.

A four-year-old has never read a physics textbook, but they understand gravity, object permanence, and how people and vehicles move through space. They learned all of this just by watching: continuous visual observation of the real world. Physical AI needs the same kind of training data: not descriptions of roads, but video of roads. Not text about how cars behave at intersections, but millions of hours of cars actually behaving at intersections.

Our Hardware

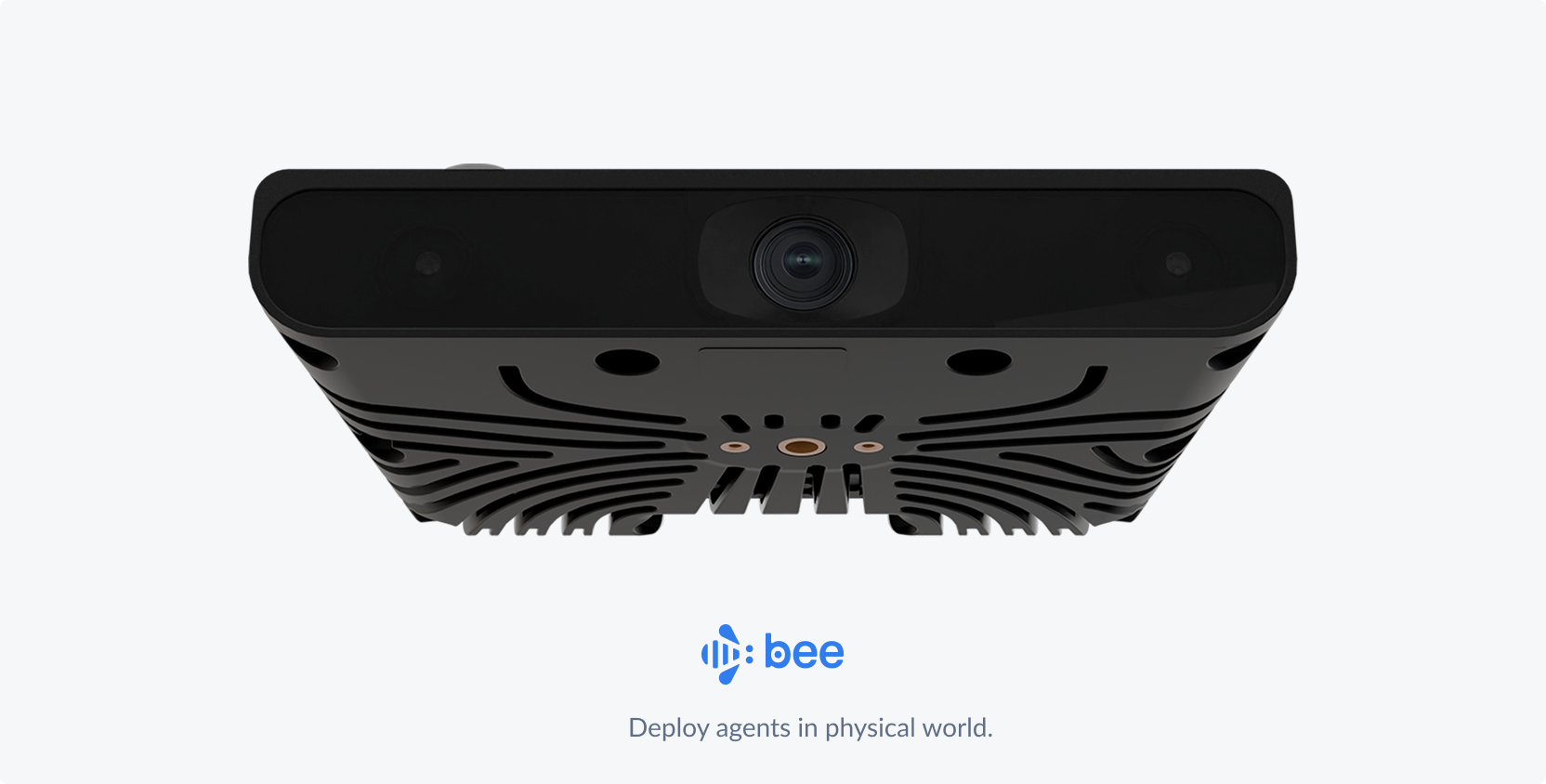

The Bee

If you want the right data, you have to build the right instrument. The Bee is a programmable sensor platform designed from scratch to capture and structure data from the physical world.

The hard part of building a sensor isn't any single component — it's getting all of them to work together in conditions you didn't anticipate. We chose every element of this device — optics, IMU, GPS, onboard compute — to serve one objective: maximizing the quality of structured spatial data under real-world constraints.

This is our fourth generation. Each version was shaped by what the previous one taught us: billions of images' worth of failure modes that don't show up in simulation.

- Validated the distributed collection model with off-the-shelf sensors

- Purpose-built lens and sensor stack for mapping-grade imagery

- On-device ML for real-time frame scoring and selective upload

- LTE connectivity, advanced IMU fusion, and hardened enclosure for global deployment

The Platform

From lens to API

We built every layer of the technology stack — from the software running on cameras in contributors' vehicles to the APIs that serve data for physical AI use cases and beyond.

Products

What we offer

Overview

What is Bee Maps?

Bee Maps captures fresh street-level imagery, map features, and AI event videos from the world's largest decentralized sensor imagery network — and delivers them through APIs. Purpose-built Bee cameras in 100+ countries passively collect data that flows through ML pipelines into structured, queryable layers.

Who it's for

- Mapmakers — continuously updated imagery and road features for map production

- Autonomous vehicle developers — millions of hours of geotagged driving video for world model training

- Fleet operators — AI-powered safety monitoring, real-time tracking, and dashcam intelligence

- AI/ML teams — large-scale, geotagged video datasets and structured road data for physical AI

What makes it different

- Hours of latency, not months — continuous collection by a distributed network means data is fresh, not stale

- Full-stack ownership — we built every layer from the camera optics to the API, ensuring quality end-to-end

- Decentralized scale — 37% global road coverage from contributors in 100+ countries, growing 5x faster than Google Street View

- On-demand mapping — Burst lets you request fresh data for any location and pay only when it's captured

Trusted by

Enterprise customers include HERE Technologies, Lyft, NBCUniversal, Mapbox, Volkswagen, and other leading mapping, automotive, and mobility companies.